The Test Pyramid, popularised by Martin Fowler, has served the industry well for years. It tells you which types of tests to write (unit, integration, end-to-end) and roughly how many of each. The pyramid is intuitive: a wide base of fast unit tests, a narrower middle of integration tests, and a thin top of slow end-to-end tests.

To be fair to Martin, the pyramid was never intended to describe test intent, only distribution. But in practice, teams use it as a proxy for both, and that’s where things go wrong. The pyramid doesn’t tell you what your tests should actually do, or what they’re for. It doesn’t say anything about design. Teams end up with thousands of tests that mock everything and verify nothing, or a handful of Selenium tests that break whenever the CSS changes.

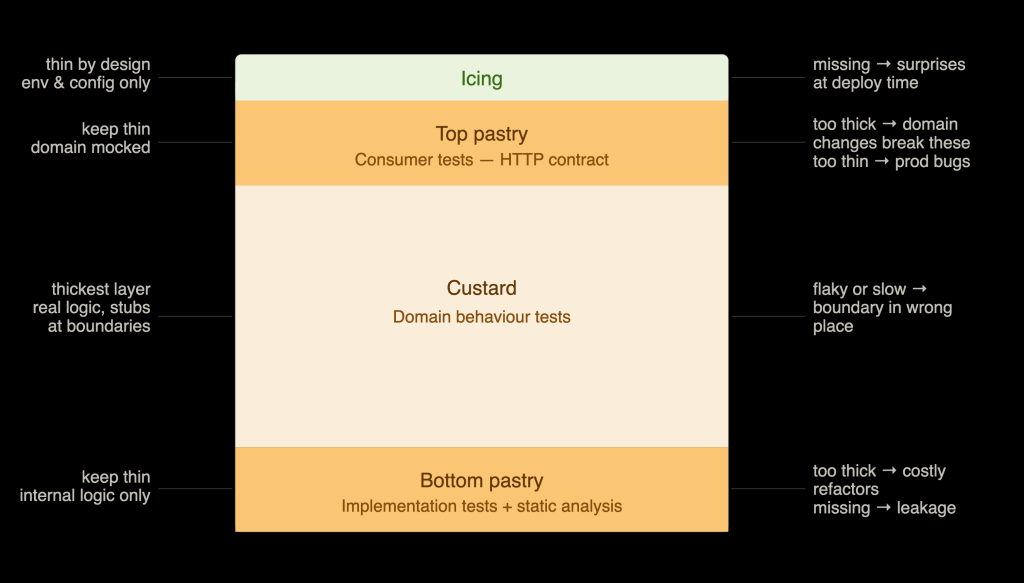

The Custard Slice is a different way of thinking about testing. Instead of categorising tests by their technical scope, it categorises them by the value they provide, and uses the physical properties of the slice itself to encode the rules, including how many tests belong in each layer.

The Custard Slice, like the Test Pyramid, is a model, and all models are wrong in some circumstances. I’ve used the Custard Slice exclusively for several years now, but with full awareness that I spent plenty of time before that working with the pyramid. It can be a replacement, but it doesn’t have to be. Understanding the merits of both, and knowing which fits your context, is more valuable than picking a side.

The metaphor

A custard slice (or vanilla slice) has four distinct layers:

- Bottom pastry: firm, structural, foundational

- Custard: rich, thick, the reason the whole thing exists

- Top pastry: crisp, structural, the outer boundary

- Icing: thin, decorative, tells you everything is in order

Each layer is necessary. When a layer is the wrong thickness, too thick or too thin, a custard slice isn’t as enjoyable to eat. That wrongness is often the same kind of pain we experience when software is tested incorrectly.

Each layer represents what we’re testing, and its thickness represents how much of your system it exercises. A thicker layer means more code is under test. A thinner layer means less.

Crucially: the goal is not a perfectly uniform, machine-produced slice. A rustic, slightly imperfect custard slice is often more pleasurable than a mass-produced one. What matters is that each layer is present, purposeful, and appropriately sized, not that they conform to exact measurements.

The bottom pastry: implementation tests

The bottom pastry represents what many developers default to calling “unit tests”: tests of internal logic and implementation detail. This layer also includes static analysis, linting, and type checking.

These tests are tightly coupled to structure. If you refactor a significant area of code that has heavy bottom-pastry coverage, a lot of those tests will need to change, not because the behaviour changed, but because the implementation did. That’s the defining characteristic of this layer, and it’s why it must stay thin.

When it’s too thick: The pastry gets in the way. Refactoring becomes expensive and painful. Tests require constant maintenance that yields no additional confidence in behaviour. Developers start to fear changing code.

When it’s too thin or missing: The custard falls out. Certain areas of the codebase are genuinely complex enough, with enough internal permutations, that testing every path through the outer layers is more trouble than it’s worth. Pure algorithmic logic, complex transformations, intricate state machines: these benefit from targeted implementation-level tests. Without them, that complexity leaks upward and becomes harder to reason about.

The bottom pastry is a necessary evil. It earns its place where combinatorial complexity makes behaviour-level testing impractical. Everywhere else, it’s dead weight.

The custard: domain behaviour tests

The custard is the most important layer. It’s thick, rich, and the reason the whole thing exists. These tests describe what the software does in terms of the problem domain (the value it provides to real users), not how it does it internally. A useful rule of thumb: a custard test runs the domain as a black box, with only external systems replaced.

A domain behaviour test should survive a significant refactor of the implementation layer without modification. If it doesn’t, the test is testing the wrong thing.

What the custard tests are not

Domain behaviour tests are emphatically not end-to-end tests. They do not spin up a browser, hit a live environment, or make real network calls. All outbound HTTP traffic is stubbed. Third-party integrations are replaced with test doubles. Event buses are treated the same way: you stub the outbound, you don’t exercise the real infrastructure.

Where third parties are difficult or impossible to stub (for example, something communicating over a binary protocol rather than JSON), the outer test boundary is replaced with an appropriate adapter that can be substituted in tests.

What the custard tests are

In-process, fast, and comprehensive. Running an in-memory database is generally trivial today, and it’s actively encouraged here. The domain should run with real persistence where that’s cheap, and with stubs only where it’s necessary.

These tests exercise a significant portion of the real codebase. The depth of the custard is real depth: actual domain objects, actual business rules, actual persistence, just with clean, controlled boundaries at the edges.
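
As a minimal sketch of what such a test could look like, assume a hypothetical order-placing domain with two boundaries: an `OrderRepository`, given an in-memory implementation, and an outbound `PaymentGateway`, which is stubbed. All of the names here (`Checkout`, `placeOrder`, and so on) are invented for illustration and don’t come from any real library.

```typescript
// Hypothetical domain for illustration; none of these names come from a real library.
interface Order {
  id: string;
  totalPence: number;
  status: "paid" | "declined";
}

// The two boundaries the domain depends on.
interface OrderRepository {
  save(order: Order): void;
  findById(id: string): Order | undefined;
}
interface PaymentGateway {
  charge(amountPence: number): { approved: boolean };
}

// In-memory persistence is cheap, so the test uses a working implementation, not a mock.
class InMemoryOrderRepository implements OrderRepository {
  private orders: Order[] = [];
  save(order: Order): void {
    this.orders = this.orders.filter((o) => o.id !== order.id).concat(order);
  }
  findById(id: string): Order | undefined {
    return this.orders.find((o) => o.id === id);
  }
}

// The domain entry point, which the test treats as a black box.
class Checkout {
  private repo: OrderRepository;
  private payments: PaymentGateway;
  constructor(repo: OrderRepository, payments: PaymentGateway) {
    this.repo = repo;
    this.payments = payments;
  }
  placeOrder(id: string, itemPricesPence: number[]): Order {
    const totalPence = itemPricesPence.reduce((sum, price) => sum + price, 0);
    const { approved } = this.payments.charge(totalPence);
    const order: Order = { id, totalPence, status: approved ? "paid" : "declined" };
    this.repo.save(order);
    return order;
  }
}

// Custard-style test: real domain logic and persistence, only the outbound call is stubbed.
function testDeclinedPaymentIsRecorded(): void {
  const repo = new InMemoryOrderRepository();
  const decliningGateway: PaymentGateway = { charge: () => ({ approved: false }) };
  const checkout = new Checkout(repo, decliningGateway);

  checkout.placeOrder("order-1", [1200, 800]);

  const saved = repo.findById("order-1");
  if (!saved || saved.status !== "declined" || saved.totalPence !== 2000) {
    throw new Error(`unexpected stored order: ${JSON.stringify(saved)}`);
  }
}

testDeclinedPaymentIsRecorded();
```

The test only touches `placeOrder` and the repository’s public interface, so the way `Checkout` computes the total could be rewritten without the test changing.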

When the custard is wrong

Because tests exist primarily to support change, problems in the custard manifest as friction around change.

Too thick or poorly shaped: Tests become flaky or slow. This is almost always a signal that the boundaries are in the wrong place, not that there are too many tests.

Too thin: Bugs appear at the outer boundaries. The integration layer reveals gaps that should have been caught in the domain. When this happens, the outer test boundary is in the wrong place: it’s compensating for missing domain coverage.

The top pastry: consumer tests

The top pastry is about one thing: ensuring that your domain’s behaviour can actually be consumed in the ways that matter to the people and systems calling it. It’s not enough for the domain to be correct if the interface in front of it is broken, misshapen, or impossible to call correctly.

In a typical REST application, a consumer test calls a route but mocks the domain entirely. The test is not interested in whether the domain logic is correct (that’s the custard’s job). It’s interested in the contract at the boundary: does the route accept the right inputs, construct the right DTO, hand it to the domain correctly, and then transform the domain’s response into the right HTTP status code and response body?

This is worth distinguishing from what many teams call “API tests”. In practice, API tests are almost always fully integrated end-to-end tests dressed up with a different name: they hit a real running service, exercise the real domain, and talk to real infrastructure. That makes them slow, fragile, and expensive to maintain. The consumer layer in the Custard Slice is specifically designed to counter that pattern. The domain is mocked. The test is fast. It verifies the surface, not the depth.

From a design perspective, this layer enforces a healthy separation. The domain should never be aware of HTTP. The route should never contain business logic. The consumer test makes this separation legible and enforceable.
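
A framework-free sketch of that idea follows. The route is modelled as a plain function so the example stays self-contained. `postOrderRoute`, `OrderingDomain`, and the status codes chosen are assumptions made for illustration, not a prescribed API. The domain is a hand-rolled mock that records what it was given.

```typescript
// Hypothetical names throughout; a sketch of a route and its consumer test.
interface PlaceOrderCommand {
  customerId: string;
  sku: string;
  quantity: number;
}
type PlaceOrderResult = { kind: "placed"; orderId: string } | { kind: "out_of_stock" };

// The only thing the route knows about the domain.
interface OrderingDomain {
  placeOrder(command: PlaceOrderCommand): PlaceOrderResult;
}

interface HttpResponse {
  status: number;
  body: unknown;
}

// Route: validates input, builds the DTO, maps the domain result to HTTP. No business logic.
function postOrderRoute(domain: OrderingDomain, requestBody: unknown): HttpResponse {
  const b = requestBody as Partial<PlaceOrderCommand> | null;
  if (
    !b ||
    typeof b.customerId !== "string" ||
    typeof b.sku !== "string" ||
    typeof b.quantity !== "number"
  ) {
    return { status: 400, body: { error: "customerId, sku and quantity are required" } };
  }
  const result = domain.placeOrder({ customerId: b.customerId, sku: b.sku, quantity: b.quantity });
  return result.kind === "placed"
    ? { status: 201, body: { orderId: result.orderId } }
    : { status: 409, body: { error: "out of stock" } };
}

// Compares by JSON so response bodies can be checked structurally.
function expectEqual(actual: unknown, expected: unknown, label: string): void {
  if (JSON.stringify(actual) !== JSON.stringify(expected)) {
    throw new Error(`${label}: got ${JSON.stringify(actual)}`);
  }
}

// Consumer test: the domain is mocked; only the contract at the boundary is checked.
function testPostOrderRoute(): void {
  const received: PlaceOrderCommand[] = [];
  const mockDomain: OrderingDomain = {
    placeOrder(command) {
      received.push(command);
      return { kind: "placed", orderId: "o-42" };
    },
  };

  const created = postOrderRoute(mockDomain, { customerId: "c-1", sku: "slice", quantity: 2 });
  const rejected = postOrderRoute(mockDomain, { sku: "slice" });

  expectEqual(created, { status: 201, body: { orderId: "o-42" } }, "valid request");
  expectEqual(rejected.status, 400, "request with missing fields");
  expectEqual(received, [{ customerId: "c-1", sku: "slice", quantity: 2 }], "DTO handed to domain");
}

testPostOrderRoute();
```

Nothing in this test would need to change if the rules for placing an order changed, as long as the shape of `PlaceOrderResult` stayed the same.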

This model also assumes that the correctness of third-party integrations is verified separately, through contract tests, provider guarantees, sandbox environments, synthetics, or, better still, testing in production and observability, rather than folded into the consumer layer.

When it’s too thick: Domain-level changes start breaking consumer tests. The tests know too much about the internals. This is a clear signal that business logic has leaked into the routing layer, or that the consumer tests are asserting on things that belong in the custard.

When it’s too thin or missing: Integration bugs appear in production. A developer made an assumption about how a route could be called, and that assumption turned out to be wrong: wrong status code, wrong response shape, missing field. These are the bugs that are embarrassing to ship and can be expensive to diagnose.

The icing: deployment verification

The icing is thin by design, and it has a specific job that is easy to conflate with testing but is actually distinct from it: deployment verification. It does not test correctness. It does not exercise domain logic or verify business behaviour. It answers one question: is this application actually running and reachable in this environment?

In practice, icing tests look something like this:

- The login page is reachable

- The health check endpoint returns successfully

- Logs are being sent to the observability platform

- The database is accepting records

- There are no exceptions on startup

That’s it. The icing is thin because it needs to be. It’s not a substitute for the layers below it: it’s a final sanity check that the layers below it are operational in production.
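
A small sketch of an icing-style check might look like the following. The `HttpGet` shape, the check names, and the URLs are assumptions for illustration. In a real pipeline `get` would wrap whatever HTTP client is available, and checks such as log delivery or database writes would need their own probes.

```typescript
// Hypothetical sketch: the HttpGet shape, check names and URLs are assumptions, not a real API.
type HttpGet = (url: string) => Promise<{ status: number }>;

interface SmokeCheck {
  name: string;
  url: string;
}
interface SmokeResult {
  name: string;
  ok: boolean;
  detail: string;
}

// Runs each check and reports reachability only; nothing here asserts business behaviour.
async function runSmokeChecks(get: HttpGet, checks: SmokeCheck[]): Promise<SmokeResult[]> {
  const results: SmokeResult[] = [];
  for (const check of checks) {
    try {
      const { status } = await get(check.url);
      const ok = status >= 200 && status < 300;
      results.push({ name: check.name, ok, detail: `HTTP ${status}` });
    } catch (error) {
      // A thrown error means the endpoint could not be reached at all.
      results.push({ name: check.name, ok: false, detail: `unreachable: ${String(error)}` });
    }
  }
  return results;
}

// Example check list for a deployed environment (placeholder URLs).
const exampleChecks: SmokeCheck[] = [
  { name: "login page", url: "https://app.example.test/login" },
  { name: "health check", url: "https://app.example.test/health" },
];
```

Any result with `ok: false` would fail the deployment step. The list stays short on purpose: each entry answers “is it running and reachable?”, never “is it correct?”.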

Hang on, what about the actual user?

Everything described so far applies equally to frontend code (web apps, native apps, games). The model doesn’t change. The layers are the same, the thickness rules are the same, and the failure modes manifest in the same ways. What changes is where the boundaries sit.

The behaviour layer: test what the user does, not what the component is

Tests written with libraries like React Testing Library and Vue Testing Library are the custard of a frontend application. These libraries were designed around a specific philosophy: test your UI the way a user interacts with it, by finding elements through accessible roles, labels, and visible text, not through component internals.

This distinction matters. An over-reliance on test IDs, CSS classes, or component structure is the frontend equivalent of testing implementation. Those tests are brittle: they break when you refactor markup without changing behaviour, and they provide no signal about whether the interface actually works for a real person. Lean on them too heavily and you have thick bottom pastry disguised as custard.

The custard here is: render a component or a feature, interact with it the way a user would, assert on what the user would see.

The consumer layer: browsers, devices, and operating systems

The top pastry for a frontend application is Playwright or Cypress: tests that exercise the rendered surface of the application across real browsers, devices, and operating systems.

The important constraint is the same as the backend: if you run these tests against the full application stack, you’ll inevitably hit the “pastry too thick” problem. The consumer tests start failing when domain logic changes, not because the rendering is wrong, but because they know too much. The domain should be mocked or stubbed, just as it is in the backend consumer layer.

What these tests are actually verifying is narrower than most teams assume. The era of needing extensive cross-browser test suites to verify that JavaScript executes correctly is largely over; browser runtimes have converged. The consumer layer today is principally about visual rendering, layout correctness, and device-specific behaviour. Does the component render correctly on a small screen? Does the font load? Does the interactive element actually respond?

A simple example

Imagine a checkout form in a React application.

Bottom pastry: A unit test for a pure calculateTax(price, region) utility function, testing every edge case and region combination at the implementation level, because exercising all of those permutations through the rendered form would be impractical.

Custard: A React Testing Library test that renders the checkout feature, fills in a delivery address, and asserts that the correct total is displayed and the submit button becomes active. Real logic, real state, no browser required.

Top pastry: A Playwright test that loads the checkout page in Chromium, WebKit (the engine behind Safari), and on a mobile viewport, and confirms the form renders correctly and the submit button is reachable, with the backend API mocked.

Icing: A smoke test that hits the deployed environment, confirms the checkout page loads, and verifies that the payment provider configuration is present and reachable.
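
To make the bottom-pastry item concrete, here is one way the `calculateTax` test could be sketched as a table of cases. The regions and rates are invented for this example and are not real tax rules, and the choice to work in whole pence (hence the `pricePence` parameter name) is an assumption.

```typescript
// Illustrative only: the regions and rates are invented for this sketch, not real tax rules.
type Region = "UK" | "DE" | "US-OR";

const RATE_BY_REGION: Record<Region, number> = { UK: 0.2, DE: 0.19, "US-OR": 0 };

// Pure function: tax in whole pence for a price given in whole pence.
function calculateTax(pricePence: number, region: Region): number {
  if (!Number.isInteger(pricePence) || pricePence < 0) {
    throw new RangeError("price must be a non-negative whole number of pence");
  }
  return Math.round(pricePence * RATE_BY_REGION[region]);
}

// Table-driven cases: cheap to enumerate here, impractical to drive through a rendered form.
const taxCases: Array<[number, Region, number]> = [
  [0, "UK", 0],
  [1000, "UK", 200],
  [1000, "DE", 190],
  [999, "DE", 190], // 189.81 rounds to the nearest penny
  [1000, "US-OR", 0],
];

// Returns a description of every case whose result differs from the expected value.
function failingTaxCases(): string[] {
  return taxCases
    .filter(([price, region, expected]) => calculateTax(price, region) !== expected)
    .map(([price, region]) => `${price} in ${region}`);
}

if (failingTaxCases().length > 0) {
  throw new Error(`calculateTax failed for: ${failingTaxCases().join(", ")}`);
}
```

Adding a region means adding a rate and a few rows to the table, which is the kind of permutation-heavy coverage that belongs in this layer.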

Why this model is useful now

The Custard Slice is opinionated about design, not just testing. It implies clear separation between domain logic and transport, between behaviour and implementation, and between what your software does and how it’s deployed. Each layer enforces a different kind of boundary, and violations of those boundaries show up as symptoms in the layer that’s wrong.

This matters more than ever as AI writes more of our code. Code generation is fast, but it produces implementation at scale, and without a clear mental model of what each test is for, teams end up with enormous test suites that provide false confidence. The Custard Slice simplifies the question: which layer does this test belong to, and is the layer the right thickness?

When something hurts (tests that break on refactoring, slow suites, integration bugs in production, deployment surprises), the metaphor tells you where to look.

I’ve built and shipped umpteen features with this model, and encouraged a whole host of engineers to use it, and it works better than I could’ve imagined. It’s not perfect, and it’s not meant to be. It’s a means to make decisions and communicate intent and, in my experience, it does that pretty well.